It’s no secret the AI accelerator business is hot today, with semiconductor manufacturers spinning up neural processing units, and the AI PC initiative driving more powerful processors into laptops, desktops and workstations.

Gartner predicts worldwide AI chip revenue will grow by 33% in 2024. The firm's report, “Forecast Analysis: AI Semiconductors, Worldwide,” details competition between hyperscalers (some of which are developing their own chips while also calling on semiconductor vendors), the use cases for AI chips, and the demand for on-chip AI accelerators.

“Longer term, AI-based applications will move out of data centers into PCs, smartphones, edge and endpoint devices,” wrote Gartner analyst Alan Priestley in the report.

Where are all these AI chips going?

Gartner predicted total AI chip revenue of $71.3 billion in 2024 (up from $53.7 billion in 2023), increasing to $92 billion in 2025. Of that total, compute electronics will likely account for $33.4 billion in 2024, or 47% of all AI chip revenue. Other sources of AI chip revenue will be automotive electronics ($7.1 billion) and consumer electronics ($1.8 billion).
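The percentages follow directly from the dollar figures. As a quick check, the short Python sketch below recomputes year-over-year growth and each segment's share of the 2024 total. The function and variable names are illustrative and do not come from the Gartner report; only the dollar figures are taken from the forecast quoted above.

```python
# Dollar figures quoted from the Gartner forecast, in billions of US dollars.
# All names below are illustrative, not Gartner's.
REVENUE_BY_YEAR = {2023: 53.7, 2024: 71.3, 2025: 92.0}
SEGMENTS_2024 = {
    "compute electronics": 33.4,
    "automotive electronics": 7.1,
    "consumer electronics": 1.8,
}


def growth_pct(previous: float, current: float) -> float:
    """Year-over-year growth, as a percentage of the earlier year's revenue."""
    return (current - previous) / previous * 100


def share_pct(part: float, total: float) -> float:
    """Share of a total, as a percentage."""
    return part / total * 100


growth_2024 = growth_pct(REVENUE_BY_YEAR[2023], REVENUE_BY_YEAR[2024])
growth_2025 = growth_pct(REVENUE_BY_YEAR[2024], REVENUE_BY_YEAR[2025])
shares_2024 = {
    name: share_pct(value, REVENUE_BY_YEAR[2024])
    for name, value in SEGMENTS_2024.items()
}

print(f"2023 to 2024 growth: {growth_2024:.1f}%")
print(f"2024 to 2025 growth: {growth_2025:.1f}%")
for name, share in shares_2024.items():
    print(f"{name}: {share:.1f}% of 2024 revenue")
```

Rounded to whole numbers, the 2024 growth rate and the compute electronics share match the 33% and 47% cited in the forecast. The three named segments do not add up to the full $71.3 billion, so the remainder belongs to categories not broken out here.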

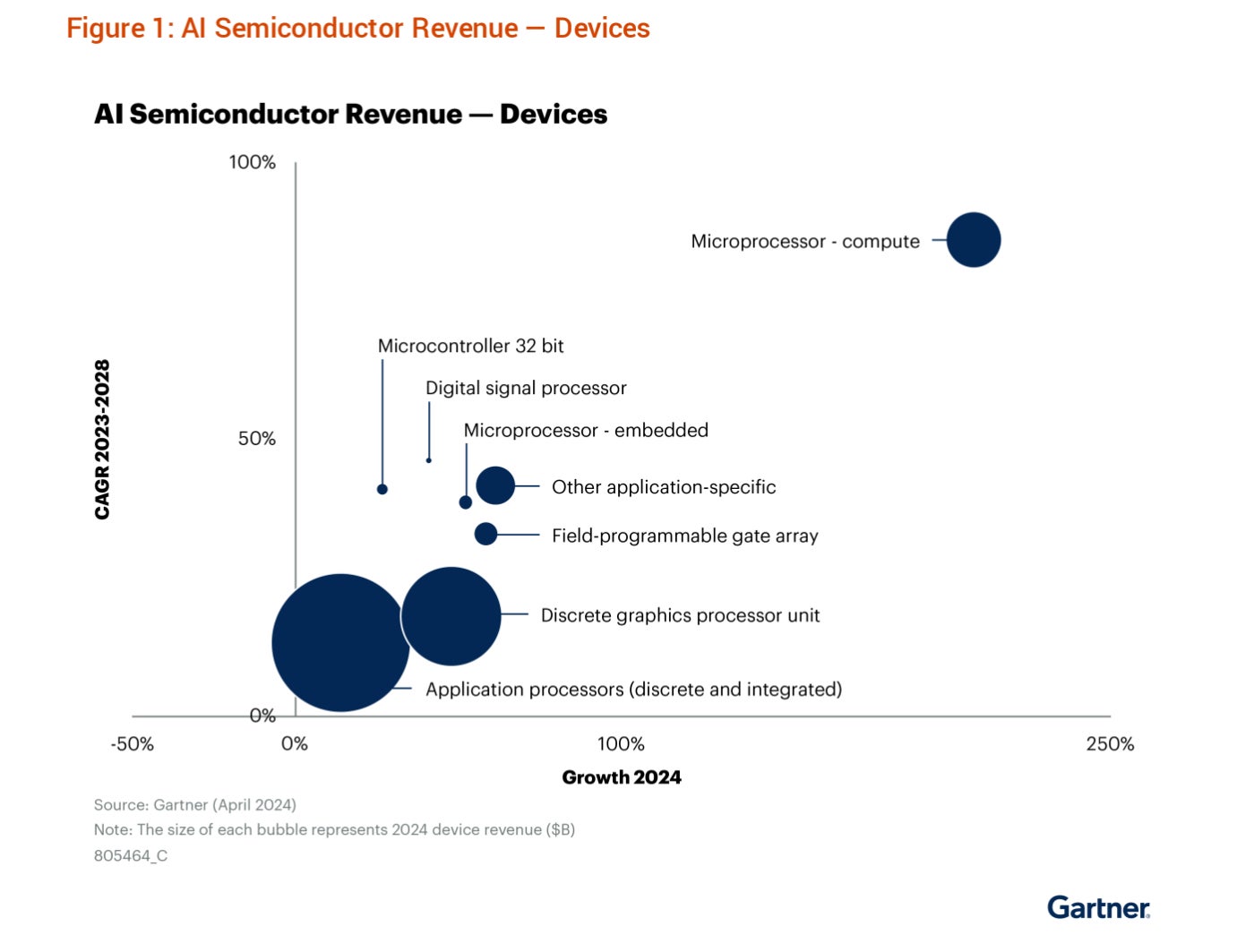

Of the $71.3 billion in AI semiconductor revenue in 2024, most will come from discrete and integrated application processors, discrete GPUs and microprocessors for compute, as opposed to embedded microprocessors.

In terms of AI semiconductor revenue from applications in 2024, most will come from compute electronics devices, wired communications electronics and automotive electronics.

Gartner noticed a shift in compute needs from initial AI model training to inference, which is the process of running a trained model on new data to produce predictions or other outputs. Gartner predicted more than 80% of workload accelerators deployed in data centers will be used to execute AI inference workloads by 2028, an increase of 40% from 2023.

AI and workload accelerators walk hand-in-hand

AI accelerators in servers will be a $21 billion industry in 2024, Gartner predicted.

“Today, generative AI (GenAI) is fueling demand for high-performance AI chips in data centers. In 2024, the value of AI accelerators used in servers, which offload data processing from microprocessors, will total $21 billion, and increase to $33 billion by 2028,” said Priestley in a press release.
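For a sense of pace, the two endpoints in that quote imply an average annual growth rate. The Python sketch below derives it under the assumption of steady year-over-year compounding between 2024 and 2028. That assumption is ours, the resulting rate is not a figure Gartner published, and the names in the code are illustrative.

```python
# Endpoints quoted from the Gartner press release, in billions of US dollars.
START_YEAR, START_VALUE = 2024, 21.0
END_YEAR, END_VALUE = 2028, 33.0


def implied_cagr_pct(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoints.

    Assumes the same growth rate every year, which is a simplification
    and not something the forecast states.
    """
    return ((end / start) ** (1 / years) - 1) * 100


years = END_YEAR - START_YEAR
cagr = implied_cagr_pct(START_VALUE, END_VALUE, years)
print(f"Implied growth over {years} years: {cagr:.1f}% per year")
```

Under that assumption the server accelerator market would grow at roughly 12% a year, noticeably slower than the 33% growth forecast for total AI chip revenue in 2024.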

AI workloads will require beefing up standard microprocessing units, too, Gartner predicted.

“Many of these AI-enabled applications can be executed on standard microprocessing units (MPUs), and MPU vendors are extending their processor architectures with dedicated on-chip AI accelerators to better handle these processing tasks,” wrote Priestley in a May 4 forecast analysis of AI semiconductors worldwide.

In addition, the rise of AI techniques in data center applications will drive demand for workload accelerators, with 25% of new servers predicted to have workload accelerators in 2028, compared to 10% in 2023.

The dawn of the AI PC?

Gartner is bullish about AI PCs, which are part of the push to run large language models locally in the background on laptops, workstations and desktops. Gartner defines an AI PC as one with a neural processing unit that lets people use AI for “everyday activities.”

The analyst firm predicted that, by 2026, every enterprise PC purchase will be an AI PC. Whether that prediction holds remains to be seen, but hyperscalers are certainly building AI into their next-generation devices.

AI among hyperscalers encourages both competition and collaboration

AWS, Google, Meta and Microsoft are pursuing in-house AI chips today, while also seeking hardware from NVIDIA, AMD, Qualcomm, IBM, Intel and more. For example, Dell announced a selection of new laptops that use Qualcomm’s Snapdragon X Series processor to run AI, while both Microsoft and Apple pursue adding OpenAI products to their hardware. Gartner expects the trend of developing custom-designed AI chips to continue.

Hyperscalers are designing their own chips to gain better control of their product roadmaps, control costs, reduce their reliance on off-the-shelf chips, leverage IP synergies and optimize performance for their specific workloads, said Gartner analyst Gaurav Gupta.

“Semiconductor chip foundries, such as TSMC and Samsung, have given tech companies access to cutting-edge manufacturing processes,” Gupta said.

At the same time, “Arm and other firms, like Synopsys, have provided access to advanced intellectual property that makes custom chip design relatively easy,” he said. Easy access to the cloud and a changing culture among semiconductor assembly and test service (SATS) providers have also made it easier for hyperscalers to get into designing chips.

“While chip development is expensive, using custom designed chips can improve operational efficiencies, reduce the costs of delivering AI-based services to users, and lower costs for users to access new AI-based applications,” Gartner wrote in a press release.
