These are unprecedented times, at least by information age standards. Much of the U.S. economy has ground to a halt, and social norms about our data and our privacy have been thrown out the window throughout much of the world. Moreover, things seem likely to keep changing until a vaccine or effective treatment for COVID-19 becomes available. All this change could wreak havoc on artificial intelligence (AI) systems. Garbage in, garbage out still holds in 2020. The most common types of AI systems are still only as good as their training data. If there’s no historical data that mirrors our current situation, we can expect our AI systems to falter, if not fail.

To date, at least 1,200 reports of AI incidents have been recorded in various public and research databases. That means that now is the time to start planning for AI incident response, or how organizations react when things go wrong with their AI systems. While incident response is a field that’s well developed in the traditional cybersecurity world, it has no clear analogue in the world of AI. What is an incident when it comes to an AI system? When does AI create liability that organizations need to respond to? This article answers these questions, based on our combined experience as both a lawyer and a data scientist responding to cybersecurity incidents, crafting legal frameworks to manage the risks of AI, and building sophisticated interpretable models to mitigate risk. Our aim is to help explain when and why AI creates liability for the organizations that employ it, and to outline how organizations should react when their AI causes major problems.

AI Is Different—Here’s Why

Before we get into the details of AI incident response, it’s worth raising these baseline questions: What makes AI different from traditional software systems? Why even think about incident response differently in the world of AI? The answers boil down to three major reasons, which may also exist in other large software systems but are exacerbated in AI. First and foremost is the tendency for AI to decay over time. Second is AI’s tremendous complexity. And last is the probabilistic nature of statistics and machine learning (ML).

Most AI models decay over time: This phenomenon, known more widely as model decay, refers to the declining quality of AI system results over time, as patterns in new data drift away from patterns learned in training data. This implies that even if the underlying code is perfectly maintained in an AI system, the accuracy of its output is likely to decrease. As a result, the probability of an AI incident often increases over time.1 And, of course, the risks of model decay are exacerbated in times of rapid change.
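One common way to watch for this kind of drift is to compare the distribution of a feature or model score in recent data against its distribution at training time. The sketch below uses the population stability index (PSI), a drift measure often used in credit-risk model monitoring. It is an illustration only, not a method prescribed in this article: the function name, the ten-bin default, and the 0.25 alert threshold (a commonly cited rule of thumb) are all assumptions.

```python
import math
from typing import List, Sequence

# Assumption: a rule-of-thumb cutoff; tune it for each system.
PSI_ALERT_THRESHOLD = 0.25


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare a training-time sample with a recent production sample."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the training range; fall back to width 1.0
    # if every training value is identical.
    width = (hi - lo) / bins or 1.0

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the training range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm below is defined.
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: scores seen in training versus scores seen recently.
training_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]  # shifted upward
psi = population_stability_index(training_scores, recent_scores)
drifted = psi > PSI_ALERT_THRESHOLD
```

A check like this could run on a schedule for each monitored input and output, with a sustained alert treated as a prompt for human review rather than as proof of an incident.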

AI systems are more complex than traditional software: The complexity of most AI systems is greater on a near-exponential level than that of traditional software systems. If “[t]he worst enemy of security is complexity,” to quote Bruce Schneier, AI is in many ways inherently insecure. In the context of AI incidents, this complexity is problematic because it can make audits, debugging, and even simply understanding what went wrong nearly impossible.2

Because statistics: Last is the inherently probabilistic nature of ML. All predictive models are wrong at times—just hopefully less so than humans. As the renowned statistician George Box once quipped, “All models are wrong, but some are useful.” But unlike traditional software, where wrong results are often considered bugs, wrong results in ML are expected features of these systems. This means organizations should always be ready for their ML systems to fail in ways large and small—or they might find themselves in the midst of an incident they’re not prepared to handle.

Taken together, AI is a high-risk technology, perhaps akin today to commercial aviation or nuclear power. It can provide substantial benefits, but even with diligent governance, it’s still likely to cause incidents—with or without external attackers.

Defining an “AI Incident”

In standard software programming, incidents generally require some form of an attacker.

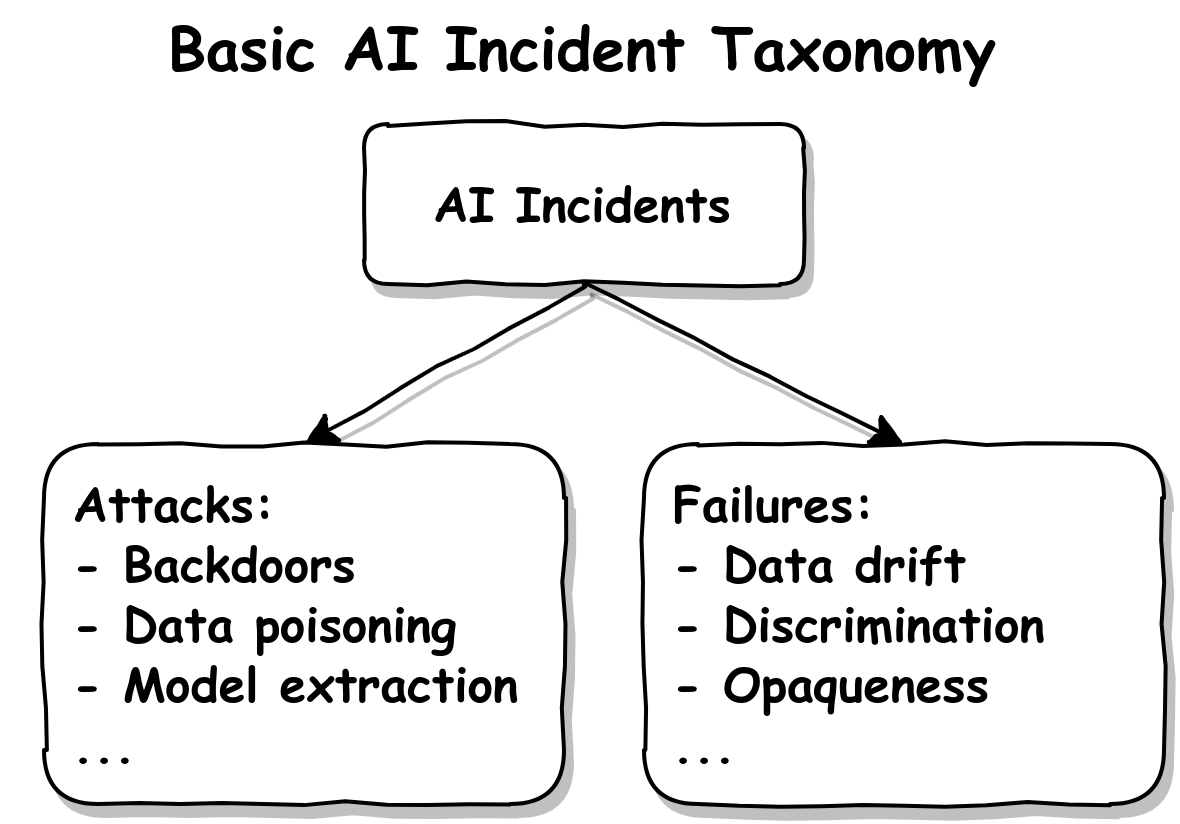

A basic taxonomy that divides AI incidents into malicious attacks and failures. Failures can be caused by accidents, negligence, or unforeseeable external circumstances.

But incidents in AI systems are different. An AI incident should be considered any behavior by the model with the potential to cause harm, expected or not. This includes potential violations of privacy and security, like an external attacker attempting to manipulate the model or steal data encoded in the model. But this also includes incorrect predictions, which can cause enormous harm if left unaddressed and unaccounted for. AI incidents, in other words, don’t require an external attacker. The likelihood of AI system failures makes AI high-risk in and of itself—and especially if not monitored correctly.3

This framework is certainly broad; indeed, it reflects the fact that an unmonitored AI system practically guarantees incidents.4 But is it too broad to be useful? Quite the contrary. At a time when organizations rely on increasingly complex software systems (both AI related and not), deployed in ever-changing environments, security efforts cannot stop all incidents from occurring. Instead, organizations must acknowledge that incidents will occur, perhaps even many of them. And that means that what counts as an incident ends up being just as important as how organizations respond when one does occur.

Understanding where AI is creating harms and when incidents are actually occurring is therefore only the first step. The next step lies in determining when and how to respond. We suggest considering two major factors: preparation and materiality.

Gauging Severity Based on Preparedness

The first factor in deciding when and how to respond to AI incidents is preparedness, or how much the organization has anticipated and mitigated the potential harms caused by the incident in advance.

For AI systems, it is possible to prepare for incidents before they occur, and even to automate many of the processes that make up key phases of incident response. Take, for example, a medical image classification model used to detect malignant tumors. If this model begins to make dangerous and incorrect predictions, preparation can make the difference between a full-blown incident and a manageable deviation in model behavior.

In general, allowing users to appeal decisions or operators to flag suspicious model behavior, along with built-in redundancy and rigorous model monitoring and auditing programs, can help organizations recognize potentially harmful behavior in near-real time. If our model generates false negative predictions for tumor detection, organizations could combine automated imaging results with activities like follow-up radiologist reviews or blood tests to catch any potentially incorrect predictions, and even improve the accuracy of the combined human and machine efforts.5

How prepared you are, in other words, can help to determine the severity of the incident, the speed at which you must respond, and the resources your organization should devote to its response. Organizations that have anticipated the harms of any given incident and minimized its impact may only need to carry out minimal response activities. Organizations that are caught off guard, however, may need to devote significantly more resources to understanding what went wrong, what its impact could be, and only then engage in recovery efforts.

How Material Is the Threat?

Materiality is a widely used concept in the world of model risk management, a regulatory field that governs how financial institutions document, test, and monitor the models they deploy. Broadly speaking, materiality is the product of the impact of a model error and the probability of that error occurring. Materiality relates to both the scale of the harm and the likelihood that the harm will take place. If the probability is high that our hypothetical image classification model will fail to identify malignant tumors, and if the impact of this failure could lead to undiagnosed illness and to loss of life for patients, the materiality for this model would be high. If, however, the impact of this type of failure was diminished (by, for example, the model being used as one of several overlapping diagnostic tools), materiality would decrease.
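As a rough illustration of that product, the sketch below scores two versions of the hypothetical tumor model. The class name, the 0 to 10 impact scale, and every number in it are assumptions chosen for the example; in practice, both inputs would come from an organization's own risk assessment.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    probability: float  # estimated chance the error occurs, between 0 and 1
    impact: float       # estimated harm if it occurs (hypothetical 0-10 scale)

    def __post_init__(self) -> None:
        if not 0.0 <= self.probability <= 1.0:
            raise ValueError("probability must be between 0 and 1")
        if self.impact < 0:
            raise ValueError("impact must be non-negative")

    def materiality(self) -> float:
        # Materiality as described above: impact times probability.
        return self.impact * self.probability


# Illustrative numbers only. The same error is less harmful when the model
# is one of several overlapping diagnostic tools, so its impact is lower.
standalone = FailureMode("missed malignant tumor, model used alone", 0.05, 10.0)
layered = FailureMode("missed malignant tumor, model plus follow-up review", 0.05, 3.0)

ranked = sorted([layered, standalone], key=FailureMode.materiality, reverse=True)
```

Ranking failure modes this way gives a simple, if crude, ordering for deciding which ones deserve the most response planning.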

Data sensitivity also tends to be a helpful measure for the materiality of any incident. From a data privacy perspective, sensitive data–like consumer financials or data relating to health, ethnicity, sexual orientation, or gender–tend to carry higher risk and therefore a greater potential for liability and harm. Additional real-world considerations for increased materiality also include threats to health, safety, and third parties, legal liabilities, and reputational damage.

Which brings us to a point that many may find unfamiliar: it’s never too early to get legal and compliance personnel involved in an AI project.

It’s All Fun and Games—Until the Lawsuits

Why involve lawyers in AI? The most obvious reason is that AI incidents can give rise to serious legal liability, and liability is always an inherently legal problem. The so-called AI transparency paradox, under which all data creates new risks, forms another general reason why lawyers and legal privilege are so important in the world of data science—indeed, this is why legal privilege already functions as a central factor in the world of traditional incident response. What’s more, existing laws propose standards that AI incidents can run afoul of. Without understanding how these laws affect each incident, organizations can steer themselves into a world of trouble, from litigation to regulatory fines, to denial of insurance coverage after an incident.

Take, for example, the Federal Trade Commission’s (FTC) reasonable security standard, which the FTC uses to assign liability to companies in the aftermath of breaches and attacks. Companies that fail to meet this standard can be on the hook for hundreds of millions of dollars following an incident. Earlier this month, the FTC even published specific guidelines related to AI, hinting at enforcement actions to come. Additionally, there are a host of breach reporting laws, at both the state and the federal level, that mandate reporting to regulators or to consumers after experiencing specific types of privacy or security problems. Fines for violating these requirements can be astronomical, and some AI incidents related to privacy and security may trigger these requirements.

And that’s just related to existing laws on the books. A variety of new and proposed laws at the state, federal, and international level are focused on AI explicitly, which will likely increase the compliance risks of AI over time. The Algorithmic Accountability Act, for example, was introduced in both chambers of Congress last year as one way to increase regulatory oversight over AI. Many more such proposals are on their way.6

Getting Started

So what can organizations do to prepare for the risks of AI? How can they implement plans to manage AI incidents? The answers will vary across organizations—depending on the size, sector, and maturity of their existing AI governance programs. But a few general takeaways can serve as a starting point for AI incident response.

Response Begins with Planning

Incident response requires planning: who responds when an incident occurs, how they communicate to business units and to management, what they do, and more. Without clear plans in place, it is incredibly hard for organizations to identify, much less contain, all the harms AI is capable of generating. That means that to begin with, organizations should have clear plans to identify the personnel capable of responding to AI incidents, and outline their expected behavior when incidents do occur. Drafting these types of plans is a complex endeavor, but there are a variety of existing tools and frameworks. NIST’s Computer Security Incident Handling Guide, while not tailored to the risks of AI specifically, provides one good starting point.

Beyond planning, organizations don’t actually need to wait until incidents occur to mitigate their impact—indeed, there are a host of best practices they can implement long before any incidents take place. Organizations should, among other best practices:

Keep an up-to-date inventory of all AI systems: This allows organizations to form a baseline understanding of where potential incidents could occur.

Monitor all AI systems for anomalous behavior: Proper monitoring ends up being central both to detecting incidents and to ensuring a full recovery during the latter stages of the response.

Stand up AI-specific preventive security measures: Activities like red-teaming or bounty programs can help to identify potential problems long before they cause full-blown incidents.

Thoroughly document all AI and ML systems: Along with pertinent technical and personnel information, documentation should include expected normal behavior for a system and the business impact of shutting down a system.
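To make the inventory, monitoring, and documentation practices above more concrete, here is a minimal sketch of what a single inventory record and a basic anomaly check might look like. Every name, field, and number is hypothetical; a real inventory would likely live in a database or model registry and track far more than one metric.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class AISystemRecord:
    name: str
    owner: str                                   # who to contact during an incident
    expected_positive_rate: Tuple[float, float]  # documented normal range
    shutdown_impact: str                         # business impact of turning it off

    def is_anomalous(self, observed_rate: float) -> bool:
        low, high = self.expected_positive_rate
        return not (low <= observed_rate <= high)


inventory: Dict[str, AISystemRecord] = {}


def register(record: AISystemRecord) -> None:
    inventory[record.name] = record


def flag_for_review(observations: Dict[str, float]) -> List[str]:
    """Return systems that are undocumented or outside their normal range."""
    flagged = []
    for name, rate in observations.items():
        record = inventory.get(name)
        # A system missing from the inventory is itself worth flagging.
        if record is None or record.is_anomalous(rate):
            flagged.append(name)
    return sorted(flagged)


register(AISystemRecord(
    name="tumor-classifier",
    owner="imaging-ml-team",
    expected_positive_rate=(0.02, 0.08),
    shutdown_impact="Scans fall back to radiologist-only review",
))

needs_review = flag_for_review({"tumor-classifier": 0.30, "shadow-model": 0.05})
```

Here both systems are flagged: one because its observed rate falls outside the documented range, and the other because it does not appear in the inventory at all.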

Transparency Is Key

Beyond these best practices, it’s also important to emphasize AI interpretability, both in creating accurate and trustworthy models and as a central feature in the ability to successfully respond to AI incidents. (We’re such proponents of interpretability that one of us even wrote an e-book on the subject.) From an incident response perspective, transparency turns out to be a core requirement in every stage of incident response. You can’t clearly identify an incident, for example, if you can’t understand how the model is making its decisions. Nor can you contain or remediate errors without insight into the inner workings of the AI. There are a variety of methods organizations can use to prioritize transparency and to manage interpretability concerns, from inherently interpretable and accurate models, like GA2M, to new research on post-hoc explanations for black-box models.

Participate in Nascent AI Security Efforts

Broader endeavors to enable trustworthy AI are also underway throughout the world, and organizations can connect their own AI incident response efforts to these larger programs in a variety of ways. One international group of researchers, for example, just released a series of guidelines that include ways to report AI incidents to improve collective defenses. Although a host of potential liabilities and barriers may make this type of public reporting difficult, organizations should, where feasible, consider reporting AI incidents for the benefit of broader AI security efforts. Just as the Common Vulnerabilities and Exposures (CVE) database is central to the world of traditional information security, collective information sharing is critical to the safe adoption of AI.

The Biggest Takeaway: Don’t Wait Until It’s Too Late

Once called “the high interest credit card of technical debt,” AI carries with it a world of exciting new opportunities, but also risks that challenge traditional notions of accuracy, privacy, security, and fairness. The better prepared organizations are to respond when those risks become incidents, the more value they’ll be able to draw from the technology.

————————————————————————————

1 The sub-discipline of adaptive learning attempts to address this problem with systems that can update themselves. But as illustrated by Microsoft’s notorious Tay chatbot, such systems can present even greater risks than model decay.

2 New branches of ML research have provided some antidotes to the complexity created by many ML algorithms. But many organizations are still in the early phases of adopting ML and AI technologies, and seem unaware of recent progress in interpretable ML and explainable AI. TensorFlow, for example, has 140,000+ stars on GitHub, while DeepExplain has 400+ stars.

3 This framework is also explicitly aligned with how a group of AI researchers recently defined AI incidents, which they described as “cases of undesired or unexpected behavior by an AI system that causes or could cause harm.”

4 In a recent paper about AI accountability, researchers noted that, “complex systems tend to drift toward unsafe conditions unless constant vigilance is maintained. It is the sum of the tiny probabilities of individual events that matters in complex systems—if this grows without bound, the probability of catastrophe goes to one.”

5 This hypothetical example is inspired by a very similar real-world problem. Researchers recently reported on a certain tumor model for which, “overall performance … may be high, but the model still consistently misses a rare but aggressive cancer subtype.”

6 Governments of at least Canada, Germany, the Netherlands, Singapore, the U.K. and the U.S. (the White House, DoD, and FDA) have proposed or enacted AI-specific guidance.
